Say you're asked to analyze a website's performance and need to know how many files the browser must download for the page to load properly. In this tutorial, I will help you accomplish that by building a Python tool that extracts all the script and CSS file links referenced by a specific web page.

We will be using requests to fetch the page and BeautifulSoup as the HTML parser. If you don't have them installed, run:

    pip3 install requests bs4

Let's start off by initializing the HTTP session and setting the User-Agent to that of a regular browser rather than the default Python client:

import requests

from bs4 import BeautifulSoup as bs

from urllib.parse import urljoin

# URL of the web page you want to extract

url = "http://books.toscrape.com"

# initialize a session

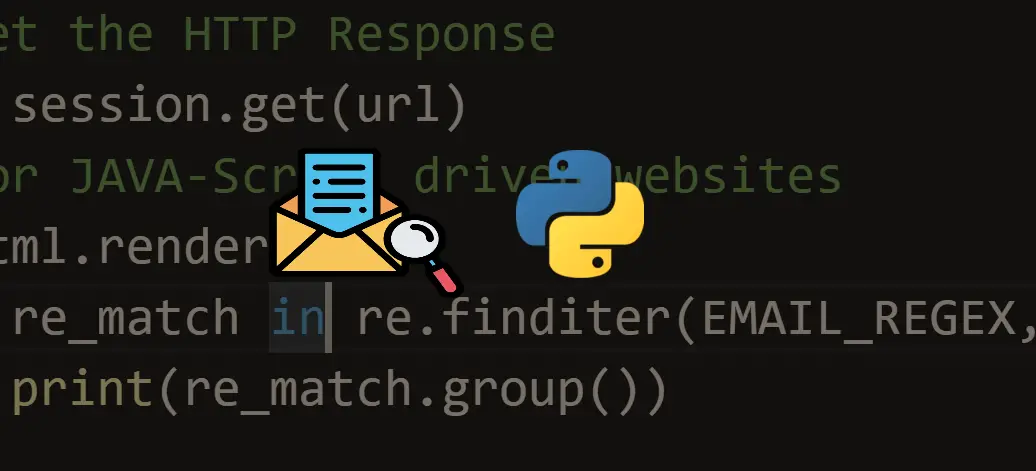

session = requests.Session()

# set the User-agent as a regular browser

session.headers["User-Agent"] = "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/44.0.2403.157 Safari/537.36"Now to download all the HTML content of that web page, all we need to do is call session.get() method, which returns a response object, we are interested just in the HTML code, not the entire response:

    # get the HTML content
    html = session.get(url).content

    # parse HTML using beautiful soup
    soup = bs(html, "html.parser")

Now that we have our soup, let's extract all script and CSS files. We use the soup.find_all() method, which returns all the tags that match the tag name and attributes passed:

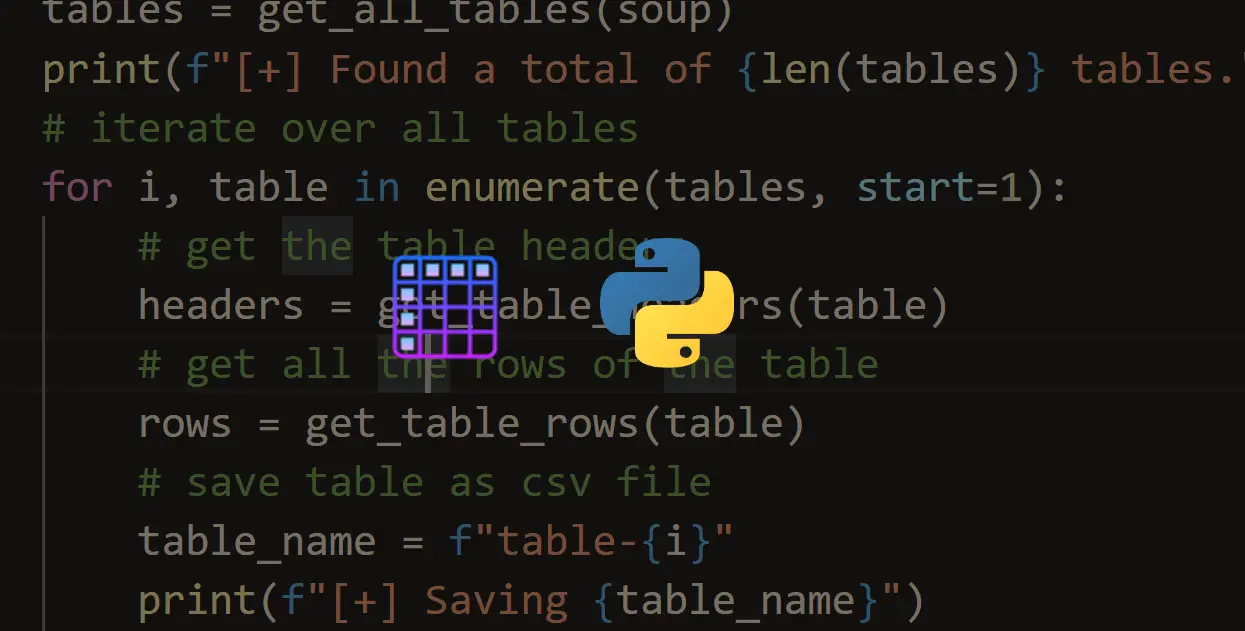

    # get the JavaScript files
    script_files = []
    for script in soup.find_all("script"):
        if script.attrs.get("src"):
            # if the tag has the attribute 'src'
            script_url = urljoin(url, script.attrs.get("src"))
            script_files.append(script_url)

Here we are searching for script tags that have the src attribute, which usually points to a JavaScript file required by the page. Script tags without src contain inline code and are skipped.
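To see this filtering in isolation, here is a minimal sketch that runs on a made-up HTML snippet instead of a live page. The `sample_html` and `base_url` values are assumptions for illustration only. It also uses the `src=True` keyword of `find_all()`, which selects only tags that carry a `src` attribute and should behave like the loop above for tags with a non-empty `src`:

```python
from urllib.parse import urljoin

from bs4 import BeautifulSoup

# Hypothetical page with one inline script and two external ones
sample_html = """
<html><head>
<script>console.log("inline, no src");</script>
<script src="/js/app.js"></script>
<script src="https://cdn.example.com/lib.min.js"></script>
</head></html>
"""
base_url = "http://example.com/index.html"  # assumed base URL for illustration

soup = BeautifulSoup(sample_html, "html.parser")

# src=True keeps only <script> tags that have a src attribute
external_scripts = [
    urljoin(base_url, tag["src"]) for tag in soup.find_all("script", src=True)
]
print(external_scripts)
```

The inline script is dropped, the relative path is resolved against the base URL, and the already absolute CDN link is left as it is.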

Similarly, we can use it to extract CSS files:

    # get the CSS files
    css_files = []
    for css in soup.find_all("link"):
        if css.attrs.get("href"):
            # if the link tag has the 'href' attribute
            css_url = urljoin(url, css.attrs.get("href"))
            css_files.append(css_url)

CSS files are referenced through the href attribute of link tags. Note that this loop collects every link tag that has an href, so other linked resources (a favicon, for example) end up in the list as well. We use the urljoin() function to make sure each link is absolute (i.e. a full URL rather than a relative path such as /js/script.js).
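Since link tags are also used for icons and other resources, one possible refinement is to keep only the tags whose rel attribute contains `stylesheet`. The following is a minimal sketch on a made-up snippet, not part of the tool above. `sample_html` and `base_url` are assumptions for illustration. It also shows how `urljoin()` resolves both a root-relative and a document-relative path:

```python
from urllib.parse import urljoin

from bs4 import BeautifulSoup

# Hypothetical <head> with a favicon and two stylesheets
sample_html = """
<head>
<link rel="shortcut icon" href="/static/favicon.ico">
<link rel="stylesheet" href="/static/css/styles.css">
<link rel="stylesheet" href="css/print.css">
</head>
"""
base_url = "http://example.com/shop/index.html"  # assumed base URL

soup = BeautifulSoup(sample_html, "html.parser")

stylesheets = []
for link in soup.find_all("link", href=True):
    # BeautifulSoup returns rel as a list of tokens, e.g. ["shortcut", "icon"]
    rel = [token.lower() for token in link.get("rel", [])]
    if "stylesheet" in rel:
        stylesheets.append(urljoin(base_url, link["href"]))
print(stylesheets)
```

With this filter the favicon is skipped, and only the two stylesheet URLs are collected.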

Finally, let's print the total number of script and CSS files and write the links into separate files:

    print("Total script files in the page:", len(script_files))
    print("Total CSS files in the page:", len(css_files))

    # write file links into files
    with open("javascript_files.txt", "w") as f:
        for js_file in script_files:
            print(js_file, file=f)

    with open("css_files.txt", "w") as f:
        for css_file in css_files:
            print(css_file, file=f)

Once you execute it, two files will appear, one for JavaScript links and the other for CSS links:

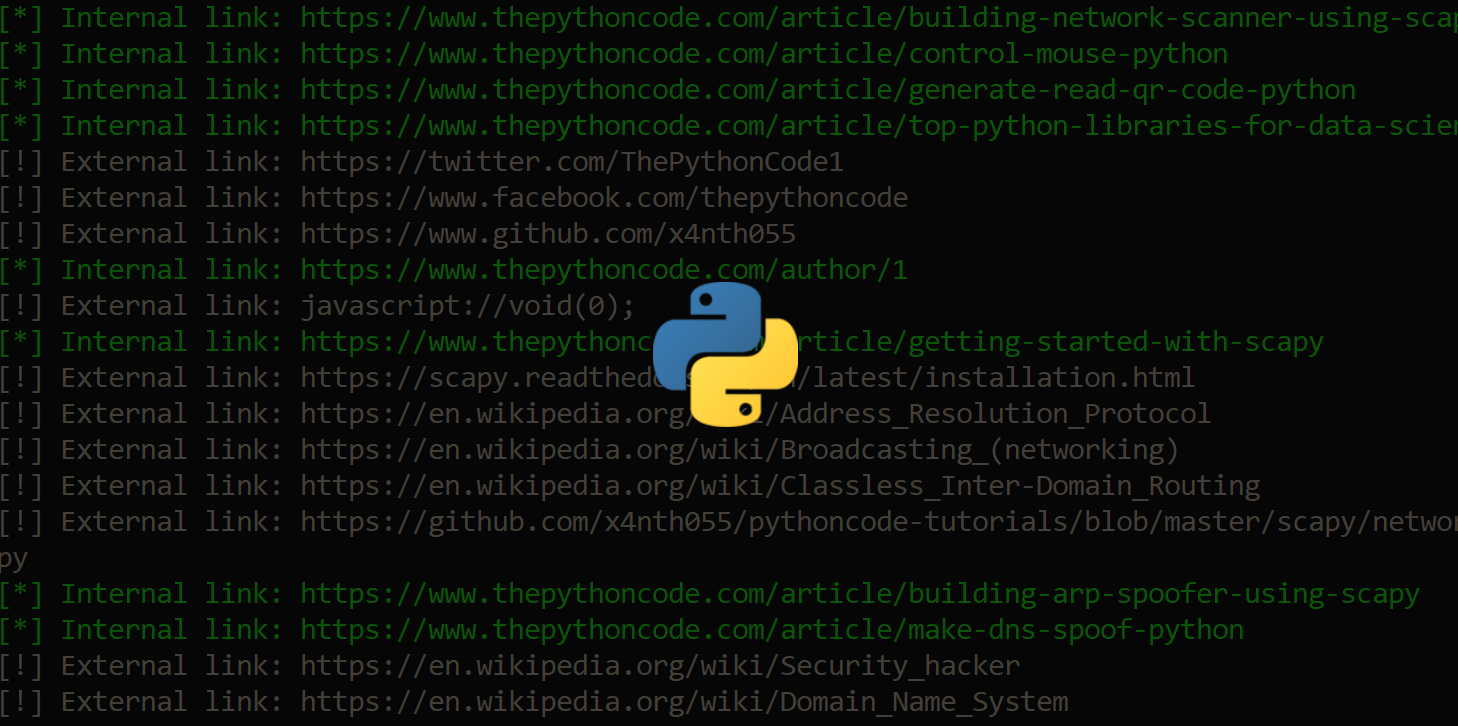

css_files.txt

    http://books.toscrape.com/static/oscar/favicon.ico
    http://books.toscrape.com/static/oscar/css/styles.css
    http://books.toscrape.com/static/oscar/js/bootstrap-datetimepicker/bootstrap-datetimepicker.css
    http://books.toscrape.com/static/oscar/css/datetimepicker.css

javascript_files.txt

    http://ajax.googleapis.com/ajax/libs/jquery/1.9.1/jquery.min.js
    http://books.toscrape.com/static/oscar/js/bootstrap3/bootstrap.min.js
    http://books.toscrape.com/static/oscar/js/oscar/ui.js
    http://books.toscrape.com/static/oscar/js/bootstrap-datetimepicker/bootstrap-datetimepicker.js
    http://books.toscrape.com/static/oscar/js/bootstrap-datetimepicker/locales/bootstrap-datetimepicker.all.js

Alright, in the end, I encourage you to extend this code into a more sophisticated audit tool that identifies the different files and their sizes, and perhaps suggests ways to optimize the website!
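As a starting point for the file sizes, here is a rough sketch of one way to do it. The helper name `resource_size` is hypothetical, and it assumes you pass in a requests session like the one created earlier. It first tries a HEAD request and reads the Content-Length header, then falls back to downloading the file if the server does not report a length:

```python
def resource_size(session, file_url, timeout=10):
    """Return the size of file_url in bytes, or None if it cannot be determined."""
    # A HEAD request asks for the headers only, so the body is not downloaded
    response = session.head(file_url, allow_redirects=True, timeout=timeout)
    length = response.headers.get("Content-Length")
    if response.ok and length is not None and length.isdigit():
        return int(length)
    # Fall back to downloading the file and measuring its content
    response = session.get(file_url, timeout=timeout)
    if response.ok:
        return len(response.content)
    return None
```

You could then call `resource_size(session, js_file)` for each entry in `script_files` and `css_files`. Keep in mind that Content-Length is whatever the server advertises, so for compressed responses it may differ from the size of the decoded file.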

As a challenge, try to download all these files and store them on your local disk (this tutorial can help).
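If you want a hint for the challenge, below is a minimal sketch of a download helper. The function name `download_file` and the default directory name are my own assumptions, not part of the original code, and it expects the same kind of requests session used above:

```python
import os
from urllib.parse import urlparse


def download_file(session, file_url, directory="downloaded_files", timeout=10):
    """Save file_url into directory and return the local path."""
    os.makedirs(directory, exist_ok=True)
    # Use the last path segment as the file name; fall back to a placeholder
    filename = os.path.basename(urlparse(file_url).path) or "index"
    local_path = os.path.join(directory, filename)
    response = session.get(file_url, timeout=timeout)
    # Raise on 4xx/5xx responses instead of saving an error page
    response.raise_for_status()
    with open(local_path, "wb") as f:
        f.write(response.content)
    return local_path
```

Calling `download_file(session, css_file)` in a loop over `css_files` would save each stylesheet. Note that two URLs ending in the same file name would overwrite each other in this sketch, so a real tool would need a naming scheme that avoids collisions.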

I have another tutorial to show you how you can extract all website links, check it out here.

Furthermore, if the website you're analyzing bans your IP address, you will need to use a proxy server.
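With requests, a session keeps its proxy settings in the `proxies` dictionary, so routing the traffic through a proxy can be sketched in a couple of lines. The helper name `use_proxy` is hypothetical, and the proxy address in the usage note is a placeholder you would replace with a server you actually have access to:

```python
def use_proxy(session, proxy_url):
    """Route the session's HTTP and HTTPS traffic through proxy_url."""
    # session.proxies maps a URL scheme to the proxy used for that scheme
    session.proxies.update({"http": proxy_url, "https": proxy_url})
    return session
```

For example, `use_proxy(session, "http://10.10.1.10:3128")` would send every later `session.get()` call through that (made-up) proxy address.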

Related: How to Automate Login using Selenium in Python.

Happy Scraping ♥
